The ethics of generative AI: how can this technology be used safely?

New discoveries about the capabilities of generative AI are announced every day. However, these discoveries are accompanied by concerns about how to regulate the use of generative AI. Legal proceedings against OpenAI illustrate this necessity.

As AI models evolve, legal regulation remains in a gray area. What we can do now is keep in mind the challenges that accompany the use of this powerful technology and learn more about the safeguards that already exist.

Using AI to combat AI manipulation

Whether it’s lawyers citing fake information created by ChatGPT, students using AI chatbots to write their assignments, or even AI-generated photos of Donald Trump’s arrest, it’s becoming increasingly difficult to distinguish real content from content created by generative AI, and to know where the limits on using these AI assistants lie.

Researchers are studying ways to prevent the abuse of generative AI by developing methods to use it against itself in order to detect cases of manipulation. “The same neural networks that generated the results can also identify these signatures, almost the markers of a neural network,” says Sarah Kreps, director and founder of the Cornell Tech Policy Institute.

One method of identifying these signatures is called “watermarking”, which consists of placing a kind of “stamp” on the output created by generative AI. This makes it possible to distinguish content that has passed through an AI from content that has not. Although studies are still underway, this could be a way to identify content that has been modified by generative AI.

Sarah Kreps compares researchers’ use of this marking method to that of teachers and professors who analyze work submitted by students to detect plagiarism. It is possible to “scan a document to find this type of signature”.
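To make the idea of scanning for a signature more concrete, here is a minimal toy sketch of one publicly discussed family of text watermarks, in which a generator quietly favors a pseudo-random “green” subset of words and a detector counts how often that subset appears. This is not the method Kreps or OpenAI describes; the hashing rule, the function names (`is_green`, `generate_watermarked`, `watermark_z_score`) and the vocabulary are all assumptions made for illustration.

```python
import hashlib
import math

# Hypothetical toy vocabulary; a real system would use a model's full token set.
VOCAB = ["data", "model", "image", "text", "law", "school", "tool", "bias",
         "rule", "user", "risk", "code", "fact", "quote", "source", "study",
         "class", "report", "people", "content"]


def is_green(prev_token: str, token: str, green_fraction: float = 0.5) -> bool:
    """Assumed rule: hash the (previous token, token) pair and call the token
    'green' if the hash lands in the lowest green_fraction of the range."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return value < green_fraction


def generate_watermarked(start: str, vocab: list[str], length: int,
                         green_fraction: float = 0.5) -> list[str]:
    """Toy 'generator': always pick the first green word after each token."""
    tokens = [start]
    for _ in range(length):
        prev = tokens[-1]
        choice = next(
            (w for w in vocab if is_green(prev, w, green_fraction)),
            vocab[0],  # fall back if no candidate happens to be green
        )
        tokens.append(choice)
    return tokens


def watermark_z_score(tokens: list[str], green_fraction: float = 0.5) -> float:
    """Detector: how far the observed share of green transitions is from
    what unmarked text would show by chance, in standard deviations."""
    n = len(tokens) - 1  # number of (previous, current) pairs
    if n < 1:
        raise ValueError("need at least two tokens")
    green = sum(is_green(p, t, green_fraction)
                for p, t in zip(tokens, tokens[1:]))
    expected = green_fraction * n
    std = math.sqrt(n * green_fraction * (1 - green_fraction))
    return (green - expected) / std


if __name__ == "__main__":
    marked = generate_watermarked("the", VOCAB, 50)
    print("z-score of marked text:", watermark_z_score(marked))
```

A high z-score suggests the text carries the stamp, while ordinary text should score near zero on average. In this sketch the detector needs only the hashing rule, not the generator itself, which is roughly what makes scanning a document practical.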

“OpenAI is thinking about the types of values it encodes in its algorithms so as not to include erroneous information or contrary or contentious results,” Sarah Kreps told ZDNET.

Digital literacy education

Computer classes at school include lessons on how to find reliable sources on the internet, how to cite them and how to conduct research properly. The same should be true for AI: users need to be trained.

Today, the use of AI assistants such as Google Smart Compose and Grammarly is becoming common. “I think these tools are going to become so ubiquitous that in five years, people will wonder why we had these debates,” says Sarah Kreps. And while waiting for clear rules, she believes that “teaching people what they should look for is part of digital literacy, which goes hand in hand with a more critical consumption of content”.

For example, even the most recent AI models commonly produce factually incorrect information. “These models make small factual errors, but present them in a very credible way,” explains Kreps. “They invent quotes that they attribute to someone, for example. And we must be aware of this. The careful examination of the results makes it possible to detect all this”.

But to do this, teaching about AI should start at the most basic level. According to the Artificial Intelligence Index Report 2023, the teaching of AI and computer science from kindergarten through high school has progressed since 2021 in 11 countries worldwide, including Belgium.

The time allocated to AI-related topics in classrooms covers, among others, algorithms and programming (18%), data literacy (12%), AI technologies (14%) and AI ethics (7%). In an example of a curriculum in Austria, UNESCO indicates that “students also understand the ethical dilemmas associated with the use of these technologies”.

Beware of bias: some concrete examples

Generative AI is able to create images from text entered by the user. This has become problematic for AI art generators such as Stable Diffusion, Midjourney and DALL-E, not only because of copyright concerns over the images involved, but also because the images they create show obvious gender and racial biases.

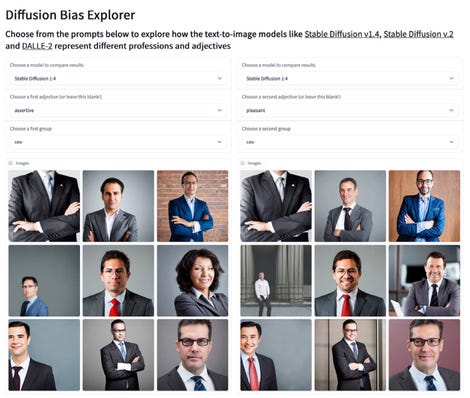

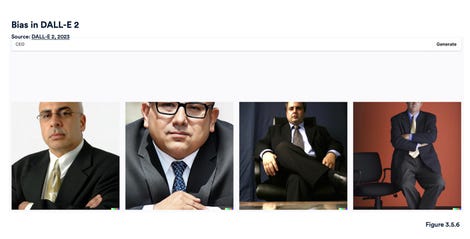

According to the Artificial Intelligence Index Report, Hugging Face’s Diffusion Bias Explorer placed adjectives in front of professions to see what types of images Stable Diffusion would produce. The generated images revealed how a profession is coded with certain descriptive adjectives. For example, “CEO” overwhelmingly generates images of men in suits when adjectives such as “pleasant” or “aggressive” are entered. DALL-E produces similar results, generating images of older, more serious men in suits.

Images from Stable Diffusion for the keyword “CEO” with different adjectives.

Images of a “CEO” generated by DALL-E.

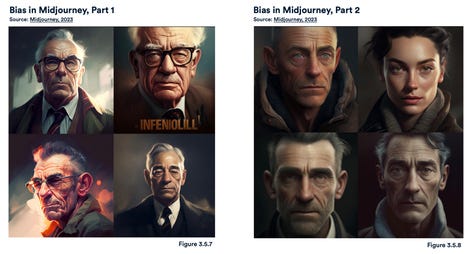

Midjourney seems to have less bias. When asked to produce an “influential person”, it generates four older white men. When the AI Index made the same request a little later, however, one of the four images Midjourney generated showed a woman. Requests for images of “someone smart”, on the other hand, produced four images of elderly white men wearing glasses.

Images of an “influential person” generated by Midjourney.

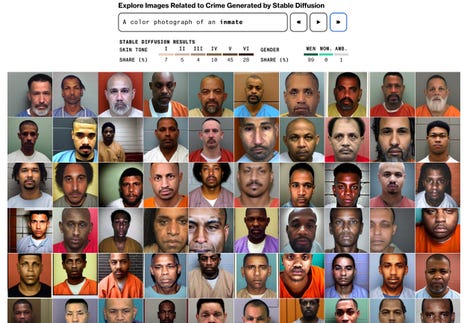

According to a Bloomberg article on the bias of generative AI, these text-to-image generators also show an obvious racial bias. More than 80% of the images generated by Stable Diffusion with the keyword “detainee” contain people with darker skin. However, less than half of the American prison population is made up of people of color.

Stable Diffusion images for the keyword “detainee”.

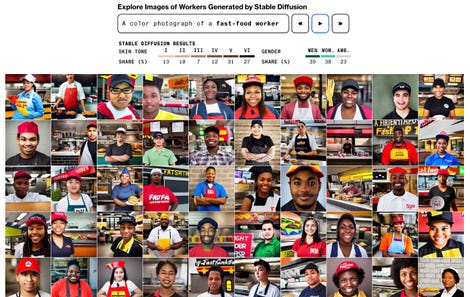

In addition, the keyword “fast food employee” produces images of people with darker skin in 70% of cases. In reality, 70% of fast food workers in the United States are white. For the keyword “social worker”, 68% of the images generated showed people with darker skin. In the United States, 65% of social workers are white.

Stable Diffusion images for the keyword “fast food employee”.
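The comparisons above boil down to simple arithmetic: the share of generated images showing people with darker skin versus the share of the actual workforce that is not white. The short sketch below works through the two cases using only the percentages quoted in this article. Treating “not white” as the counterpart of “darker skin” is a rough simplifying assumption, and the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BiasCheck:
    keyword: str
    generated_darker_skin_pct: int  # share of generated images (from the article)
    workforce_white_pct: int        # share of real US workers who are white

    @property
    def workforce_non_white_pct(self) -> int:
        # Assumption: everyone not counted as white is the comparison group.
        return 100 - self.workforce_white_pct

    @property
    def gap_points(self) -> int:
        # Positive gap: darker-skinned people are over-represented in images.
        return self.generated_darker_skin_pct - self.workforce_non_white_pct


checks = [
    BiasCheck("fast food employee", 70, 70),
    BiasCheck("social worker", 68, 65),
]

if __name__ == "__main__":
    for c in checks:
        print(f"{c.keyword}: {c.generated_darker_skin_pct}% of images vs "
              f"{c.workforce_non_white_pct}% of workforce "
              f"(gap: {c.gap_points} percentage points)")
```

Under that assumption, the gap is 40 percentage points for “fast food employee” and 33 for “social worker”.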

How to regulate “dangerous” questions?

What topics should be banned from ChatGPT? “Should people be able to learn the most effective assassination tactics via an AI?” Dr. Kreps asks. “This is just an example, but with an unmoderated version of AI, you could ask this question or ask how to make an atomic bomb”.

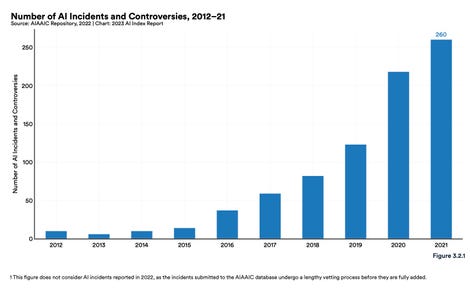

According to the Artificial Intelligence Index Report, the number of incidents and controversies related to AI increased 26-fold between 2012 and 2021. With the new capabilities of AI causing more and more controversy, it is urgent to carefully examine what we are feeding into these models.

Regardless of which regulations are eventually adopted and when they take effect, the responsibility lies with the human who uses AI. Rather than fearing the growing capabilities of generative AI, it is important to focus on the consequences of the data that we feed into these models, so that we can recognize cases where AI is being used unethically and act accordingly.

Source: ZDNet.com